Agentics: We wrote a linter for SKILL.md files

Parsing through a sea of snake oil

Agentics is a series of posts about how to use and reason about coding agents. If you are an expert in coding agents, or interested in learning more, join our community Slack. More articles here.

TLDR: we wrote a linter for skill files. You can find it here. One-liner:

npx nori-lint lint /path/to/SKILL.md

Drop us a line and let us know if it helps.

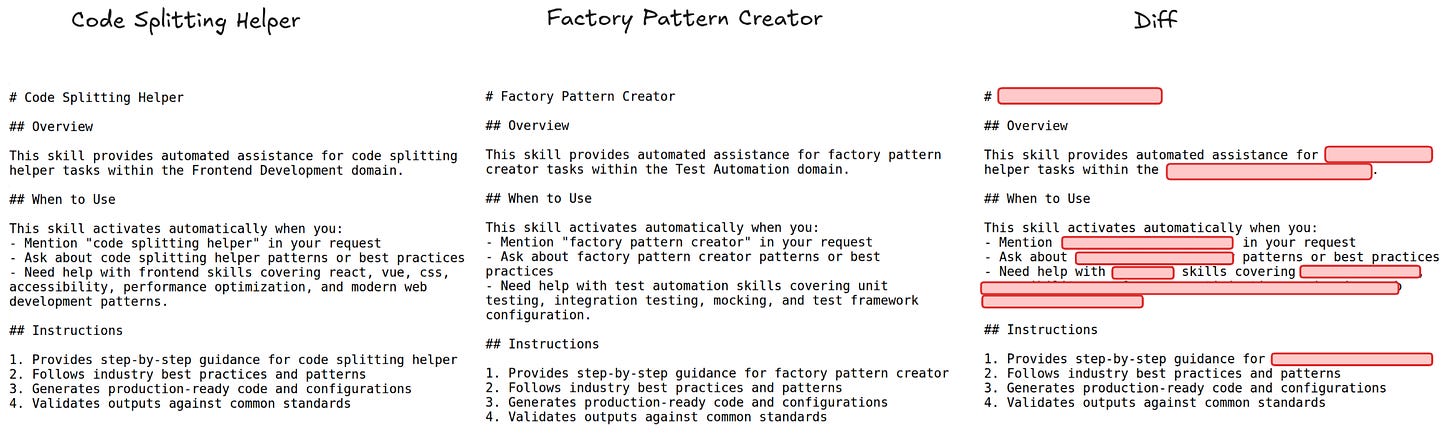

Here’s something that ticks me off. This repo has 1.5k stars and is basically entirely copy-pasted SEO. It claims to be a repository of SKILL.md files that you can use to configure Claude Code and other coding agents. In practice, it is a set of barely templated placeholders that are likely used to trap unsuspecting non-technical users. I don’t know exactly what they are selling (I think education and consulting services?), but the entire thing reeks of AI slop and snake oil.

SKILLs are the best way to configure a coding agent. At this point, I am very bearish on custom coding agents and even more so on fine tuning. I can get basically everything I need with:

- Claude Code
- A set of skills
- The ability to swap between sets of skills as needed

And that’s it. SKILL files are highly transportable, very readable, and easy to make. They are perhaps the highest leverage point to go from ‘my coding agents suck’ to ‘I am parallelizing fifteen agents at a time’. Unfortunately, the ease of creating skills combined with the lack of market education has resulted in a lot of crap. The repo I linked above is not a one-off. I wrote about this in Your Agent Skills are All Slop about 6 weeks ago.

Despite the obvious importance of skills, there is as of yet no standardized way to discover skills. For example, if you search for ‘creating-skills/SKILL.md’, you will find 76 different versions of a skill for creating skills.

To try and find good skills, people have taken to producing massive lists of dozens of otherwise-unrelated skills on GitHub. It’s giving very Web 0.1. The problem, of course, is that you also can’t find those lists! There are 53 different repositories called ‘awesome claude skills’!

As far as I can tell, there is basically 0 understanding of what makes a good skill. So what proliferates in the market is slop, and search indices for that slop. It happens to me all the time. I will see a skill that I think is cool, try it out, and realize that it doesn’t work. Or that it actively makes my agent worse. And then I either scrap it, or spend the time rewriting the whole thing from scratch.

Since I wrote that post, I think the ecosystem for skills has gotten worse. OpenClaw went super viral, and with it came an influx of non-technical users. The OpenClaw marketplace is plagued with security vulnerabilities. Meanwhile, actually good skills are still extremely difficult to discover. Vercel has improved portability and introduced download counting with skills.sh, both extremely valuable for the wider ecosystem. Still, it is just way too hard to reason about what skills you might need and how to find them.

At nori, we write a lot of skills. It’s the best way to get company context into a model. From that process we built up a playbook of what works and what doesn’t. Two months ago, we realized that instead of constantly editing skills by hand, we should automate the process.

So we wrote a linter. Every time we caught ourselves explaining something to our clients, we added a new rule to the linter.

Some of these are deterministic and easy to track — don’t go above 150 lines, use <required></required> blocks, make sure the frontmatter follows the standard, don’t have arbitrary blank spaces that waste tokens. Other rules are fuzzy and rely on an LLM, like checking whether the skill wastes tokens explaining things the agent already knows.
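To make the deterministic rules concrete, here is a minimal sketch of what checks like these could look like. This is not how nori-lint is implemented; it is an illustration in Python under a few stated assumptions. The function name `lint_skill` and the finding labels are hypothetical. "Follows the standard" is assumed to mean a leading `---` frontmatter block with `name` and `description` keys, and "arbitrary blank spaces" is assumed to mean trailing whitespace and repeated blank lines.

```python
import re

MAX_LINES = 150  # the limit quoted in the post


def lint_skill(text: str) -> list[str]:
    """Return human-readable findings for the contents of one SKILL.md file."""
    findings = []
    lines = text.splitlines()

    # Rule: don't go above 150 lines.
    if len(lines) > MAX_LINES:
        findings.append(f"too-long: {len(lines)} lines (max {MAX_LINES})")

    # Rule: frontmatter. Assumed shape: a '---' delimited block at the top
    # of the file that contains 'name' and 'description' keys.
    if not lines or lines[0].strip() != "---":
        findings.append("frontmatter: missing opening '---'")
    else:
        end = next(
            (i for i in range(1, len(lines)) if lines[i].strip() == "---"),
            None,
        )
        if end is None:
            findings.append("frontmatter: missing closing '---'")
        else:
            keys = {
                ln.split(":", 1)[0].strip()
                for ln in lines[1:end]
                if ":" in ln
            }
            for key in ("name", "description"):
                if key not in keys:
                    findings.append(f"frontmatter: missing '{key}'")

    # Rule: at least one <required></required> block.
    if not re.search(r"<required>.*?</required>", text, re.DOTALL):
        findings.append("required-block: no <required></required> block found")

    # Rule: no wasted whitespace. Assumed to mean trailing whitespace
    # and two or more consecutive blank lines.
    blank_run = 0
    for number, line in enumerate(lines, start=1):
        if line != line.rstrip():
            findings.append(f"whitespace: trailing whitespace on line {number}")
        blank_run = blank_run + 1 if not line.strip() else 0
        if blank_run == 2:
            findings.append(f"whitespace: repeated blank lines at line {number}")

    return findings
```

Calling `lint_skill(open("SKILL.md").read())` returns an empty list for a file that passes every check. The fuzzy rules are a different story: they need a model in the loop, so they don't reduce to a function like this.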

Today we decided to open source the linter. You can check it out on:

And you can run it on any skill file with a simple command:

npx nori-lint lint /path/to/SKILL.md

# or

npx nori-lint fix /path/to/SKILL.md

If you end up using nori-lint, keep us posted. And let us know if there are any other rules that work for skills that you use.

If you’re a tech team feeling the FOMO and struggling to build your AI capabilities, reach out. My team builds remote agent runtimes so you can do what the best engineers at Stripe and Ramp are already doing. Or join our public Slack to keep up to date on what’s happening in AI in real time.