Agentics: Using Metacognition to Get A Model Upgrade

The industry has coalesced around a standard best practice for using coding agents. We're calling it SPACE development.

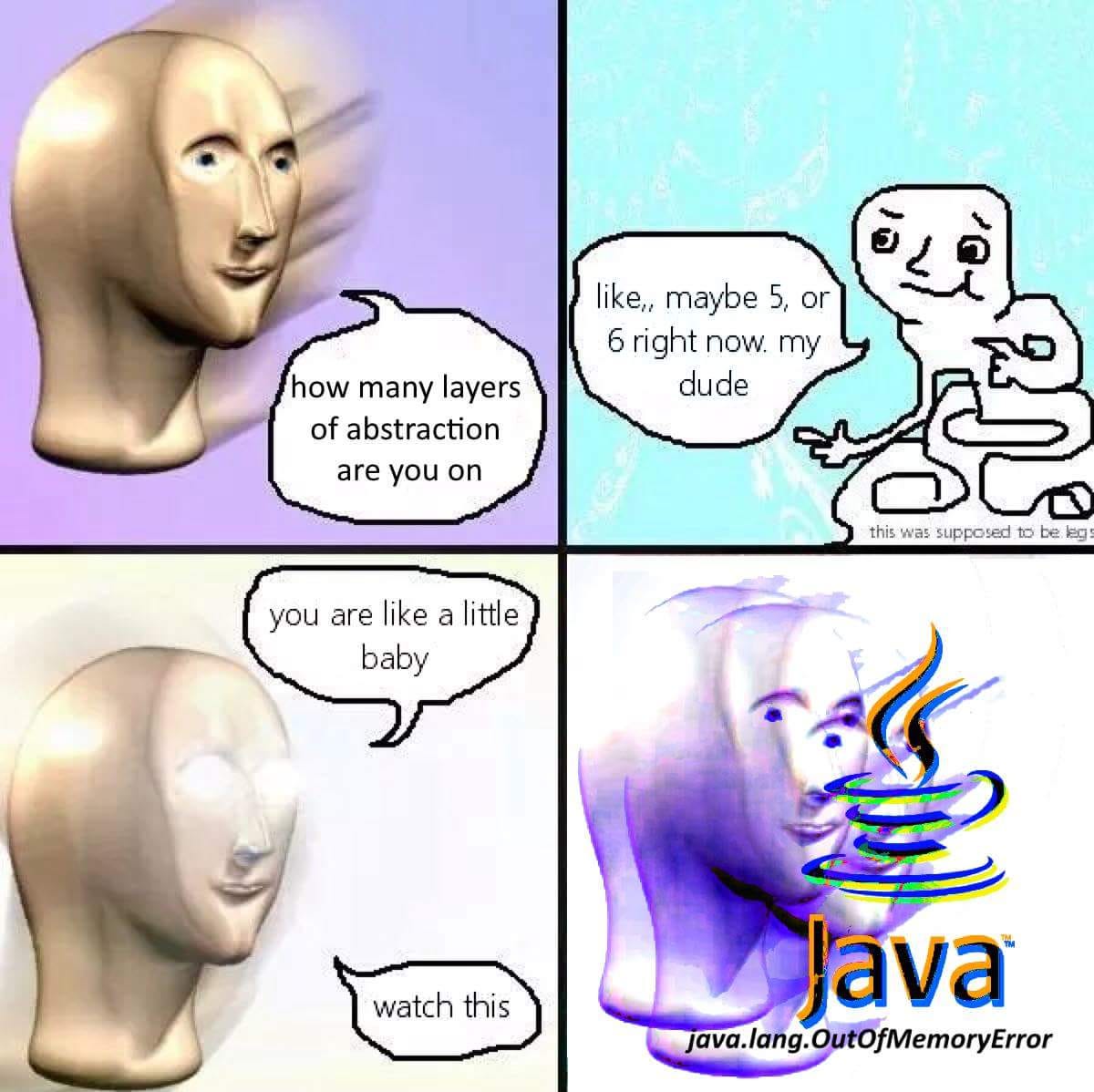

You need to teach your coding agents how to think. The agents know everything in the world. They know all the bash commands. They know everything about DNS records. You think you know kubectl? Compared to the agents, you are a baby at kubectl.[1] The agents know everything in the world. But they don’t know how to think.

It’s still early, but I think AI software devtools are increasingly coalescing around what I call SPACE Development. That is:

- Search
- Plan
- Assert
- Code
- Evaluate

Folks may use different terminology, and some drop the assert step (to their detriment), but this basic core has come up over and over again. First you research the problem and pull in context. Then you write up a plan that explains how you aim to tackle it. Then you define a set of success criteria. Then you write your code. And finally you evaluate whether your code meets the success criteria.
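To make the ordering concrete, here is a minimal Python sketch of one SPACE pass on a toy task. The task (a hypothetical `slugify` helper), the function names, and the stubbed search and plan outputs are all assumptions for illustration; in real use the agent does each step, and nothing here is meant as a harness. What it shows is that the success criteria are fixed in the Assert step before any implementation exists, so Evaluate checks the code against those criteria rather than against whatever the code happens to do.

```python
import re

# Toy task (hypothetical): "add a slugify helper". Each stage is a stub standing
# in for work the agent does; only the ordering of the stages is the point.

def search(question: str) -> list[str]:
    # Search: gather context before touching any code.
    return ["slugs are lowercase", "runs of non-alphanumerics become one '-'"]

def plan(context: list[str]) -> list[str]:
    # Plan: write down the approach, informed by the context.
    return ["lowercase the input", "collapse non-alphanumeric runs to '-'", "trim stray '-'"]

def assert_criteria() -> list[tuple[str, str]]:
    # Assert: fix the success criteria (input, expected) BEFORE any code exists.
    return [("Hello World", "hello-world"), ("  SPACE  Dev!! ", "space-dev"), ("a--b", "a-b")]

def code(text: str) -> str:
    # Code: implement the plan.
    return re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")

def evaluate(fn, criteria: list[tuple[str, str]]) -> list[tuple[str, str, str]]:
    # Evaluate: return every (input, expected, actual) that misses a criterion.
    failures = []
    for inp, want in criteria:
        got = fn(inp)
        if got != want:
            failures.append((inp, want, got))
    return failures

if __name__ == "__main__":
    context = search("Add a slugify helper")
    for step in plan(context):
        print("plan:", step)
    failures = evaluate(code, assert_criteria())
    print("all criteria met" if not failures else f"failures: {failures}")  # all criteria met
```

Dropping the Assert step would mean writing the criteria after seeing the code, which is exactly when it is tempting to make them match the code instead of the problem.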

SPACE Development has become best practice for building software with agents. I’m not the first to notice. Here’s Shaiyan Rais, who owns the claude-code-best-practices repo and has already compiled most of the research I was planning to do:

> All major workflows converge on the same architectural pattern: Research → Plan → Execute → Review

Shaiyan drops ‘assert’, but it’s there in all of the frameworks that use TDD, including Superpowers, Everything Claude Code, Matt Pocock Skills, etc.[2]

But notice that this is not really a strategy for writing software specifically. It’s a guide for how to think about certain kinds of problems. You can take the same basic SPACE pattern and apply it to everything from building a web game to creating a language compiler. And if you are flexible with the ‘code’ step (replace it with something more generic, like Execute[3]), SPACE development becomes a general-purpose hammer for a huge range of tasks.

The only way to land on something like SPACE Development is through metacognition — essentially, thinking about thinking. Every time you look at a process and think ‘how can I do this better?’ or ‘how can I formalize this?’ you’re doing some kind of metacognition. For example, throwing a basketball is just basic cognition. You’re just doing a thing. Thinking about how to flick your wrist to get backspin on the ball every time you shoot? That’s metacognition. You’re abstracting out from a particular instance of a thing — in this case, shooting hoops — to instead reason about the underlying process.

If agents are bad at thinking, they are totally incapable of thinking about thinking. So you need to do the metacognition for them. That means thinking about how to think through different kinds of problems, and then giving the agents the steps you land on. To poorly paraphrase, teach an agent to solve a single problem, and you’ll get a lot of slop to review; teach an agent to think, and you’re set for life and/or you’ve automated your job away (the analogy got away from me).

Mechanically, what this ends up looking like is a very particular style of AGENTS.md and SKILL.md files. Something like this:

<required>

1. Add all of the following steps to your TODO list.
2. Research how to best solve my question WITHOUT making code changes.
   - Search for relevant skills using Glob/Grep in `{{skills_dir}}/`
   - Use the WebFetch tool to search in parallel
3. Read and follow the writing-plans skill. Present plan to me and ask for feedback.
4. Read and follow the tdd skill.
5. Update docs.
6. Push a PR with your changes.

</required>

The goal of these configs is not to provide context. Context is data; it lives on disk to be queried when needed. Rather, these configs describe processes that tell the agent how to make use of the context it has available. In my mind, there is a world of difference between the Superpowers brainstorming skill and the Anthropic PDF skill, even though both are implemented as skill markdown files. The former is a thought process; the latter is just information.

Are there other kinds of thinking processes useful for software? Debugging comes to mind:

1. Add logs.
2. Replicate bug.
3. Read logs.
4. Change code.
5. Repeat.

This is basically a version of the scientific method: form a hypothesis, test it, and adjust it based on the results.
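The add-logs, replicate, read, change loop is easy to see in miniature. Below is a minimal Python sketch around a made-up bug: a hypothetical `parse_port` helper that splits `"host:port"` on every colon and therefore breaks on IPv6 addresses like `[::1]:8080`. The logger name, the helper, and the bug are all assumptions for illustration, and the fixed version is written out ahead of time only so the loop runs end to end. In practice, the "change code" step is where the agent (or you) forms the next hypothesis from what the logs showed.

```python
import logging

log = logging.getLogger("debug_sketch")  # hypothetical logger name
log.setLevel(logging.DEBUG)
log.propagate = False

class ListHandler(logging.Handler):
    """Collects formatted log lines so the 'read logs' step can inspect them."""
    def __init__(self) -> None:
        super().__init__()
        self.lines: list[str] = []

    def emit(self, record: logging.LogRecord) -> None:
        self.lines.append(self.format(record))

handler = ListHandler()
log.addHandler(handler)

def parse_port_v1(addr: str) -> int:
    # Original (hypothetical) code, with a log line added in step 1.
    parts = addr.split(":")
    log.debug("addr=%r parts=%r", addr, parts)
    host, port = parts  # hypothesis under test: breaks when the host contains ':'
    return int(port)

def parse_port_v2(addr: str) -> int:
    # Step 4 (change code): split only on the last ':' so IPv6 hosts survive.
    host, port = addr.rsplit(":", 1)
    log.debug("addr=%r host=%r port=%r", addr, host, port)
    return int(port)

def replicate(fn, addr: str):
    """Step 2: run the failing input with fresh logs; return (result, error)."""
    handler.lines.clear()
    try:
        return fn(addr), None
    except ValueError as exc:
        return None, exc

# Step 5 (repeat): each iteration is one hypothesis -> test -> adjust cycle.
for version in (parse_port_v1, parse_port_v2):
    result, error = replicate(version, "[::1]:8080")
    print(f"{version.__name__}: result={result} error={error}")
    for line in handler.lines:  # Step 3: read logs.
        print("   log:", line)
    if error is None:
        break
```

The log line in `parse_port_v1` is what turns a bare "too many values to unpack" into evidence: it shows the address splitting into four parts, which points straight at the fix.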

Sometimes you get processes inside processes. For example, if you’re trying to build something really big, you might have SPACE Development as a subroutine.

1. Read large specification.
2. Identify small, actionable piece.
3. SPACE development on that small piece.
4. Update specification.
5. Repeat.
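To make the nesting concrete, here is a toy Python sketch in which a full SPACE pass is just one function call inside an outer spec loop. `Spec`, `space_develop`, `build`, and the `" (big)"` marker are hypothetical names invented for this example; the stub pretends a SPACE pass can discover that a piece is too large and hand back smaller follow-ups, which the update step writes back into the spec. It illustrates the shape of the process, not an orchestration harness to build.

```python
from dataclasses import dataclass, field

@dataclass
class Spec:
    todo: list[str]                                # pieces not yet built
    done: list[str] = field(default_factory=list)  # pieces finished by a SPACE pass

def space_develop(piece: str) -> list[str]:
    """Stand-in for one Search/Plan/Assert/Code/Evaluate pass on a single piece.

    Returns follow-up pieces if the pass decides the piece was too big
    (a hypothetical " (big)" suffix marks those in this demo), else [].
    """
    if piece.endswith(" (big)"):
        name = piece.removesuffix(" (big)")
        return [f"{name}: part 1", f"{name}: part 2"]
    return []

def build(spec: Spec) -> Spec:
    while spec.todo:                       # Repeat until nothing is left.
        piece = spec.todo.pop(0)           # Read spec; take the next small piece.
        follow_ups = space_develop(piece)  # SPACE development on that piece.
        if follow_ups:
            spec.todo[:0] = follow_ups     # Update spec: splice in smaller pieces first.
        else:
            spec.done.append(piece)        # Update spec: record the finished piece.
    return spec

if __name__ == "__main__":
    result = build(Spec(todo=["lexer", "parser (big)", "codegen"]))
    print(result.done)  # ['lexer', 'parser: part 1', 'parser: part 2', 'codegen']
```

Splicing follow-ups onto the front of the list keeps the work depth-first: a piece that turned out to be too big gets finished before the loop moves on to the next item in the spec.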

I could probably rattle off a dozen of these sorts of thinking patterns. I suspect the specific formulation doesn’t matter all that much. The larger point is that if you’re using an agent out of the box, it won’t have the awareness to do this sort of thing at all!

If you’re not the kind of person to invest a ton in configuration, pick up any of the open source LLM configs that encode SPACE development. We mentioned Superpowers from Jesse Vincent a few times here; it is really the OG LLM config setup in the space and a great starting point. I’ve written about my own personal setup quite a bit, and I host it on the nori skillsets registry.

What I wouldn’t do is try to build out a complicated mechanism for enforcing any explicit line of thinking. I have seen too many engineers fall into the tarpit of building complicated agent harnesses with complicated handoff scripts and, like, just don’t do it. See:

Even within the base providers, I’m very against “plan mode” as a concept. I’ve tried it and really don’t like it. I think that sort of forcing-the-model-into-a-box defeats the purpose of using a fuzzy general purpose machine.

Instead, teach the model how to think. It’ll take you ten minutes and a few dozen lines of markdown, and ends up being the equivalent of getting access to GPT-N+1 months before everyone else.

[2] A lot of the above frameworks roll TDD into their ‘execute’ step.

[3] SPAEE development didn’t have the same ring to it.