Tech Things: The Most Important Trial About Social Media Just Finished

The public is increasingly frustrated with social media and the gamification of our attention. Now terms like 'addiction' have entered the legal sphere.

When the cigarette and tobacco companies were dragged in front of courts around the country in the 90s on charges of public endangerment, they all played roughly the same line: “why would anyone keep using our product if it’s harmful?”

Given how things are in 2026, I think it’s fair to say that that line of reasoning didn’t really go that well for them.1

The big issue is that it turns out there are lots of reasons why people will do things that are harmful to themselves. This is not exactly a brand new idea, at least in the common-sense understanding of things. Alcoholism is such a well known issue that supposedly an entire ethnic group developed a genetic aversion to the stuff, and history is littered with temperance movements and the like. But all previous attempts at regulating addiction were framed as ethical, pseudo-religious necessities. God created the world, but he also created alcohol, or something, anyway no you can’t have whiskey.

The regulations that sprang out of the mid-to-late 90s were grounded in science, which was a bit new, and was only really possible because science had caught up to our intuitions. We could point to causal mechanisms of addiction. Those mechanisms drove choices that these companies purposely made. Those choices made their products (cigarettes) more harmful to end users. And that harm was the basis for liability. The tobacco companies ended up having to pay massive fines ($200+ billion), while state and local legislation effectively banned smoking in most public places.

As a result of that work — both educational and legislative — we have a simplified but very well understood language for addiction. There are substances that can make someone behave as if they weren’t themselves, and these substances should be regulated.

It is massively helpful to have this baseline understanding of addiction in the general consciousness. Before, people would say silly things like “anyone can quit cigarettes, they’re just lying if they say they can’t” (while taking a drag of their fourth cigarette before breakfast). The language of addiction changed this. By making it clear that addictive substances changed a person’s brain chemistry, it became way more acceptable to shift the conversation from personal responsibility to corporate liability.

Of course, the language of addiction is very simple, and as a result there are a whole bunch of addictive things that don’t fit neatly into the language above. For example, gambling. I think basically everyone knows on some gut level that gambling can really screw someone up. And there’s lots of research to back this up. Just to pick one out of a hat, fMRI scans show that gambling really can change brain chemistry. But it’s not, like, a ‘substance’. There isn’t any obvious ‘thing’ that is changing brain chemistry. It’s the action of gambling itself that is a problem. And this really breaks some people’s brains, because how can an action, or a concept, or an idea change your brain chemistry as much as some good ol’ C2H5OH? As a result, gambling is way less regulated, and has crept into all sorts of weird places. Sports betting, prediction markets, loot boxes, gacha games. A lot of these target minors explicitly, because it’s not ‘addiction’.

In a past life, in my molecular bio days, I spent a fair bit of time doing addiction research.2 When it comes to addiction, there isn’t any special requirement for something to be a ‘substance’. You can get addicted to drugs. You can get addicted to fatty foods and to coffee. You can get addicted to visual stimuli (porn), or physical actions (a wide range of eating disorders), or even toxic relationships. Everything we do ends up being translated to protein pathways and electrical signals. Sometimes that warps our brains. And sometimes, people build things that warp our brains intentionally.

Last week, one of the most important cases about social media regulation wrapped up. From NPR:

A California jury on Wednesday found that Meta and Google were to blame for the depression and anxiety of a woman who compulsively used social media as a small child, awarding her $6 million in a rare verdict holding Silicon Valley accountable for its role in fueling a youth mental health crisis.

While the financial punishment is miniscule for companies each worth trillions of dollars, the decision is still consequential. It represents the first time a jury has found that social media apps should be treated as defective products for being engineered to exploit the developing brains of kids and teenagers.

Lawyers for KGM argued that Instagram and YouTube were deliberately designed to be addictive and the companies knew the platforms were harming young people, while the tech companies countered that their services cannot be blamed for complex mental health issues.

KGM’s legal team showed the jury internal documents from Meta in which CEO Mark Zuckerberg and other executives described the company’s efforts to attract and keep kids and teens on its platforms. One document said: “If we wanna win big with teens, we must bring them in as tweens.” Another internal memo showed that 11-year-olds were four times as likely to keep coming back to Instagram, compared with competing apps, despite the platform requiring users to be at least 13 years old.

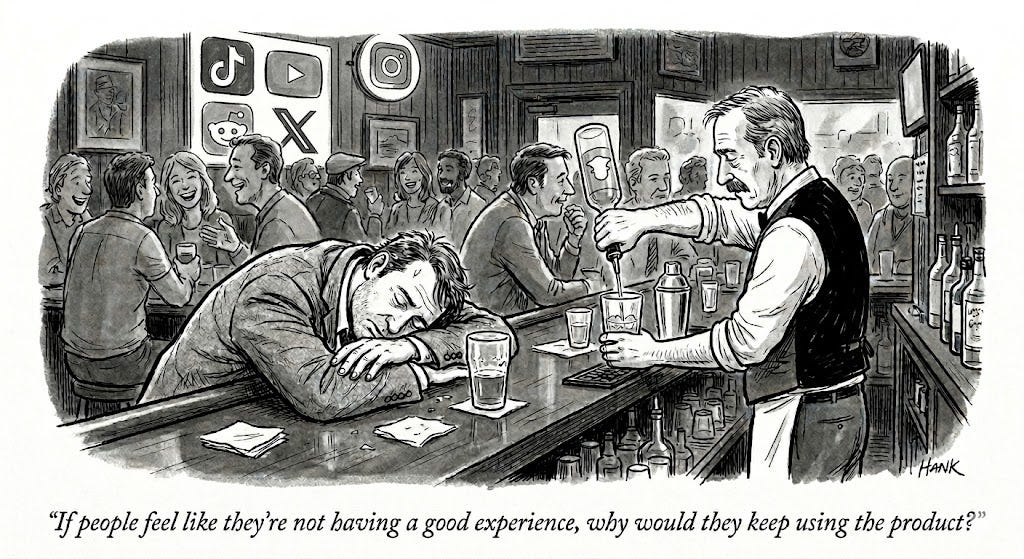

Under questioning about those documents, Zuckerberg told the jury that keeping young users safe has always been a company priority. “If people feel like they’re not having a good experience, why would they keep using the product?” Zuckerberg said.

I think there are a lot of things you could say about who is to blame for the plaintiffs social media consumption habits. You could argue personal responsibility. You could say that the parents should have been more involved — the plaintiff started on the apps when she was 6 (!!!). You could even say that schools and teachers and peers all played a role. But all of these assume that social media itself is, inherently, a risky thing, something that needs to be managed and controlled. It would be insane to claim that, actually, the 6yo using social media every waking minute of the day is totally fine because they are actually having a good experience.

But of course, this is all new legal ground. The case wasn’t supposed to get this far to begin with.

Historically, the big social media companies would avoid liability in these kinds of cases because of Section 230, the law that exempts digital platforms from liability caused by user-generated content. The argument goes something like this: the harms created on these platforms are entirely driven by the content posted by other people; it’s really sad that people become depressed when they see content posted by other people, and the platforms are responsible for monitoring and removing content that is actually illegal to post; but the platforms cannot be held liable when someone becomes depressed because of legal content posted by other people.

This is an extremely compelling argument. There are lots of sad things that happen, and it would be ridiculous to penalize Meta or Google for, e.g., reporting on wars or famines or deaths or whatever. I’ve often said that Meta in particular catches a lot of flak for problems that are really just human problems. People are messy, and complicated, and when you put a billion of them on the same platform you get an exponential explosion of ways things can go wrong. So in general, I think Section 230 is an incredible law, a great example of smart and forward thinking legislators creating the scaffolding for decades of technological, economic, and social progress.3 Judges and juries tend to agree, and have consistently ruled in favor of the big Internet platforms.

Still. There’s clearly a blurry line between “unopinionated information pipe” and “highly editorialized opinion platform.” The gap lies in product design — the millions of choices made that makes Twitter different from Facebook, Facebook different from Tiktok, and Tiktok different from YouTube.

It’s not hard to construct a hypothetical example where the product design is itself clearly harmful. For example, imagine you had a social media company with the ticker MEAT (no relation to any other company).

And imagine that 0.001% of posts on MEAT are really terrible — ai slop racist incitement to violence, that sorta thing. You might rightfully raise an eyebrow if MEAT exclusively showed those 0.001% of posts to everyone browsing their ‘For You’ page. You might raise two eyebrows if the company simply washed their hands of the matter, arguing that because all the content is technically user generated, they have no liability — even though documents show that the code literally has a line that says

if (post == unsettling) visibility = highIt always seemed silly to me to argue that the algorithms that power personal feeds and addictive dark patterns fall under “user generated content”. Legally it’s obviously a grey area, as are many things in the world of software. But just intuitively, obviously the design of a product is the output of a company! What else are all the patents for?

This is, essentially, what the defense argued too. They avoided content. They avoided user behavior. They avoided moderation. Instead, they stayed laser focused on product decisions and the internal discussions and documents that drove those product decisions. And they were able to show liability. Again, from NPR:

The verdict validated the plaintiff’s lawyers’ approach of shifting the legal target; instead of focusing on the content people see on social media, the case put the spotlight on how social media services were designed. Meta’s apps, including Instagram, and Google’s YouTube, the jury concluded, were deliberately built to be addictive and the companies’ executives knew this and failed to protect their youngest users.

Jury trials are famously swingy, so it’s hard to say whether the legal reasoning will stick. But jury trials are great barometers for how the public feels about a topic, and right now, I think the public vibes are rancid. It is conventional wisdom that many companies do try to hack our brains. Everyone in the valley is aware of engagement metrics. Everyone knows that optimizing for engagement works, even when doing so is harmful. Personally I’ve often felt like my phone is channeling some kind of demonic entity. When I have kids they are going to stay as far from phones as possible for as long as possible.4 Most people I know feel the same way. Just about everyone I know is taking steps to try and mitigate their screentime, and failing miserably.

So in the wake of this ruling, many people are cheering that this is finally a way to discourage the addictive patterns that have become endemic to digital life. Including, as it turns out, the jury itself!

Another juror, who gave her name only as Victoria, acknowledged that the jury wanted to send a message to the companies. “We wanted them to feel it,” she said. “We wanted them to realize this was unacceptable.”

But I think it is too easy to blame the big social media giants. Big fines might feel good,5 but they are a hammer when what we need is a scalpel — literally and figuratively the wrong tool for the job.

Imo, all of these companies are reacting to incentives. If Meta puts down its guns and makes their algorithms less effective, they lose users and revenue to Tiktok. If Tiktok puts down its guns and makes their algorithms less effective, they lose users and revenue to Twitter. And so on. This is the kind of coordination problem that government regulation is designed to solve. I think the real indictment lies with lawmakers who have been extremely slow and unwilling to take meaningful steps on these issues.

I have long advocated for a ‘disabled by default’ policy — algorithmic personalization should be disabled unless a user manually enables it.6 I like this approach because it increases user choice, giving more control back to the consumer to set guardrails on their own usage without outright paternalistic bans. I think only the most callous lobbyist would be against this proposal.

But such a solution still requires some kind of law, because again, no one is going to do this unilaterally. I worry that if we keep trying to use the judiciary as a solution here, we will inevitably break Section 230 — either by completely gutting it or expanding it too far.

Right now, Meta and Google have stated that they intend to appeal the ruling. I think this is the obvious thing to do. But I also think this is a missed opportunity stemming from a lack of creativity. In an alternate world, Meta and Google could pledge to do better and then work with lawmakers directly to craft laws that deescalate the attention war. That is a win for everyone — the companies don’t have to spend countless billions on a zero sum attention game, and consumers get their lives back. It also is likely a better outcome for the big tech platforms. Like I said above, the public is angry and frustrated. If the tech companies continue fighting tooth and nail, the end result is not that they will avoid regulation. Rather, like the tobacco companies before them, they will simply not be given a seat at the table.

Other things:

Delve was removed from the YC website. Delve was the main story of last week’s Tech Things, where we discussed the scandal and the fall out. I mentioned previously that the company was defacto dead regardless of whether it did or didn’t commit the fraud it is accused of committing. YC’s distancing all but confirms that.

OpenAI acquired TBPN. TBPN is a hilarious concept, a sports podcast for the tech world. It works because they take themselves super seriously, but also because it was obviously a joke. This was an especially interesting acquisition because TBPN is basically only big on Twitter. But that’s also where the entire tech world (unfortunately) seems to be. Generally these kinds of acquisitions don’t have great results for the acquired brand — it’s hard to keep up the same kind of edgy irreverant humor when big money gets involved. Curious to see what happens.

The Claude Code leak has been all over the news and I don’t have much interesting to say, except that I am very concerned about what this all means for open source. People took the CC leak and had an AI automatically clean-room the code. The argument is that this effectively removes the license. Sure, this feels fine when we’re looking at code from a multi-billion dollar company. But the same logic applies to any other open source library out there. If you can effectively delicense anything with AI, open source licenses as a regime will basically cease to exist. May write a more full post on this soon.

Everyone who founded xAI left. Probably does not bode well for grok? Setting aside my personal opinions of Elon, I really can’t tell whether he is still making good business decisions. I mean, sure, 10 years ago it was uncontroversial. Now? He has so much money that he’s about as insulated from the market as an engineer working deep in some middle management layer at a FAANG for 15 years. That said, even though I have been very bearish on Tesla self driving, their new models are actually good. It seems throwing enough compute after the problem is enough to overcome (some of) the limitations of not having lidar. So maybe this bet will also pay off? But then I’ve also been bearish on Twitter, and that clearly was correct? For better or worse, the man has created a little universe entirely to himself. A bet on any of those companies is really just a bet on the one guy.

We’re going to the moon!

The actual history is interesting and frustrating. The big tobacco companies often simply lied and denied the mounting scientific evidence that the poison product was harmful at all. This bought them ~30 years in friendly courts that were unwilling to block individual freedoms. It wasn’t until internal docs leaked in the 90s that it became impossible for the companies to flatly deny the harm.

we literally got zebrafish drunk to see what they would do, it’s amazing how easy it is to push the frontiers of knowledge forward

There have been growing efforts to get rid of it in recent years, driven in part by anger at the social media companies for the sorts of things that drove the lawsuit above. That faction is independently thrilled at this jury result, because they think it a step towards getting rid of 230. I think those efforts are misguided.

Mia: Yay! Agreed!

Though in this case, they weren’t really that big. Not sure how to interpret that.

You can imagine all sorts of additional corollaries to this, e.g. that companies cannot advertise to users that the algorithmic personalization exists to ensure we do not just see a proliferation of cookie-banner-like popups.

> If you can effectively delicense anything with AI, open source licenses as a regime will basically cease to exist. May write a more full post on this soon.

I’d be very interested in this.