Tech Things: Everyone's Getting Fired

AI comes for tech jobs. Security issues seem to be skyrocketing. General Catalyst Ad, Elon v OpenAI, GameStop and eBay, Claude v Codex.

Everyone’s Getting Fired

It’s been over a year since I wrote this piece:

Back then, I wrote:

Assume that there are five senior engineers at a company. Each can produce 4k lines of code a month, or 20k total. And let’s say AI gets good enough that it can empower a single senior engineer to be 5x more productive.

There are two possible extremes here.

On one hand, the company could decide that it does not need the extra code. The primary company bottleneck is not feature dev or maintenance, so it just doesn’t need the extra coding power. In this world, the company holds the number of lines of code constant and gets rid of 4 of the 5 engineers.

On the other hand, the company may decide that it really needs all hands on deck. Maybe the company is a standard techco that needs to push out features as fast as possible. In this world, the company holds the number of engineers (that they can afford) constant, and increases the LoC output to 100k per month.

As with all things, the actual answer is likely in the middle somewhere. But notice that any setting of this knob that’s not the most extreme option — the one that sets the “lines of code” dial all the way to the max — results in someone being fired.
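To make the knob concrete, here is a minimal Python sketch of that arithmetic. The five engineers, 4k lines a month, and 5x multiplier come from the passage above; the function name `engineers_needed` and the choice to round up to whole engineers are my own assumptions for illustration, not a real staffing model.

```python
# Toy model of the "lines of code" dial described above.
# Inputs taken from the essay: 5 engineers, 4k LoC each per month, 5x AI multiplier.
ENGINEERS = 5
LOC_PER_ENGINEER = 4_000
AI_MULTIPLIER = 5

BASELINE_LOC = ENGINEERS * LOC_PER_ENGINEER   # 20k lines a month before AI
MAX_LOC = BASELINE_LOC * AI_MULTIPLIER        # 100k lines a month with everyone kept


def engineers_needed(target_loc: int) -> int:
    """Headcount required to hit a monthly LoC target with AI assistance.

    Assumption (not from the essay): headcount is rounded up to whole engineers.
    """
    loc_per_boosted_engineer = LOC_PER_ENGINEER * AI_MULTIPLIER
    # Ceiling division without importing math.
    return -(-target_loc // loc_per_boosted_engineer)


# Sweep the dial from "hold output constant" to "max output".
for target in range(BASELINE_LOC, MAX_LOC + 1, 20_000):
    kept = engineers_needed(target)
    print(f"target={target:>7,} LoC/month  keep={kept}  cut={ENGINEERS - kept}")
```

Under that rounding assumption, every target at or below 80k lines a month needs fewer than five engineers. Only targets above 80k keep the whole team.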

Well, AI has definitely gotten good enough that it can empower a single engineer to be 5x more productive. Naively, if you equate productivity multiples to the number of agents you have running at the same time, you may be able to get to 7-10x productivity (yes yes I know this is not a 1:1 measure of productivity improvement, it’s directionally correct). And companies have started setting the dial.

Meta:

Meta said on Thursday it plans to lay off roughly 10% of its workforce, or about 8,000 people, the latest in a string of tech industry layoffs fueled in part by artificial intelligence.

The company is also closing around 6,000 open roles, Janelle Gale, Meta’s chief people officer, wrote in a memo published by Bloomberg that Meta confirmed to CNN.

TD Cowen estimates the cuts hit between 20,000 and 30,000 positions, roughly 18% of Oracle’s global workforce of approximately 162,000 people. India was hit hardest, with approximately 12,000 employees terminated out of Oracle’s roughly 30,000 person Indian workforce. Affected workers included software engineers, account executives, program managers, and staff from Oracle Health, Sales, Cloud, Customer Success, and NetSuite.

today we’re making one of the hardest decisions in the history of our company: we’re reducing our organization by nearly half, from over 10,000 people to just under 6,000. that means over 4,000 of you are being asked to leave or entering into consultation.

Snap:

April 15 (Reuters) - Snap (SNAP.N) will lay off about 1,000 employees, including 16% of full-time staff, the company said on Wednesday, becoming the latest tech firm to shift toward leaner teams as it ramps up AI adoption to streamline operations.

The move, which also includes the closure of more than 300 open roles, comes weeks after Irenic Capital Management pushed the Snapchat parent to optimize its portfolio and improve performance. The activist investor has an economic interest of about 2.5% in the company.

Cloudflare, which provides internet security and performance services to millions of websites worldwide, announced it was cutting its workforce by approximately 20%, which equates to 1,100 people, it said as part of its first quarter 2026 earnings report on Thursday.

Microsoft’s program announced on April 23, 2026 takes a different structural approach to the same underlying problem. Rather than involuntary terminations, Microsoft offered 8,750 U.S. employees — about 7% of its domestic workforce of approximately 125,000 — a voluntary separation package that includes 26 weeks of base pay, accelerated equity vesting and 12 months of healthcare. Acceptance window closes May 16, 2026. Internal estimates suggest Microsoft expects 60-70% of eligible employees to accept, implying actual departures of 5,250 to 6,125.

On Tuesday, crypto exchange platform Coinbase Global Inc (Nasdaq: COIN) announced it was laying off about 14% of its staff, or roughly 700 employees. As Fast Company previously reported, Coinbase CEO Brian Armstrong cited two factors for the layoffs.

The first was the recent volatility in crypto markets in general, which Armstrong said necessitated cost-cutting measures.

And the second factor? AI.

Four operational changes are part of the workforce reduction.

We’re reevaluating our operational footprint, and are planning to reduce the number of countries by up to 30% where we have small teams. We’ll continue serving customers in those markets through our partner network.

We’re planning to flatten the organization, removing up to three layers of management in some functions so leaders are closer to the work.

We’re re-organizing R&D to create roughly 60 smaller, more empowered teams with end-to-end ownership, nearly doubling the number of independent teams.

We’re rewiring internal processes with AI agents, automating the reviews, approvals, and handoffs to speed us up, and plan to right-size roles across the company to follow suit.

Most recently, Cisco:

Today we announced our Q3 FY26 earnings with record revenue of $15.8 billion, up 12 percent year over year, and double-digit top and bottom-line growth. The ELT and I could not be prouder of the growth you have all delivered for Cisco.

...

With this, we are making changes today that will result in the reduction of our overall workforce in Q4 by fewer than 4,000 jobs, representing less than 5 percent of our total employee base.

It feels like there is a new layoff announcement every week. The message is basically always the same: we’re making tons of money, AND AI is making people redundant. AI denialists like to point to COVID overhiring as the primary culprit behind these layoffs, but COVID was 6 years ago. At some point, you just need to catch up with the times.

From Claude:

As of May 10, 2026, there have been 179 layoff events impacting 113,863 workers in tech this year per the SkillSyncer tracker, while TrueUp counts 286 events and roughly 128,270 people impacted, averaging about 1,000 per day.

Yikes. AI specific roles (training models, optimizing GPUs, things like that) seem like they are still safe for now, but most everything else in tech is flat or down. Reskilling has hit the tech world; I know a bunch of SWEs who have started reading ML textbooks in their free time.

And I think it’s going to get worse from here.

AI tools are still new, and I wouldn’t say most of these companies are particularly good at using the AI tools they have at their disposal. As a particularly silly / egregious example, Meta has been evaluating AI usage based on number of tokens spent per employee.

Meta is taking token usage into account during perf reviews. A current engineering manager at the social media giant told me that the token usage of each engineer is now a data point — one of many! — for performance calibrations. By itself, it is not a positive or negative signal, but someone perceived as having low impact and with low token usage is now seen as a blatant low performer. For high performers with outstanding impact, very high token usage is seen as a good thing as it conveys to the manager group that they’re personally invested in AI and are improving their workflow – as proved by results.

Friends of mine at Meta talk about how they have agents that are literally just talking to each other during the day to ensure they can ‘tokenmaxx’, because that is obviously what is going to happen when you tie performance to total token usage. But I can’t really blame Meta. Their tokenmaxxing policy is a sledgehammer because the tech industry as a whole has not yet figured out how to use scalpels. It speaks volumes that, even with their inefficiencies, Meta is still laying off so many people.

[EDIT: While I was writing this draft, Ars Technica published an article about Amazon doing the same thing:

Some employees said colleagues were using the software to automate additional, unnecessary AI activity to increase their consumption of tokens—units of data processed by models.

They said the move reflected pressure to adopt the technology after Amazon introduced targets for more than 80 percent of developers to use AI each week, and earlier this year began tracking AI token consumption on internal leader boards

These are not exactly subtle policies!]

The one silver lining is that it is easier than ever to start a company. Back to the Meditations piece:

So to recap, a lower barrier to entry in the video game industry meant:

Massive increase in quantity and variety of output;

A shift in viable business structures;

An increase in the importance of taste and curation

…

Hopefully the parallels are obvious.

AI makes it easier for everyone to code. We should expect that this will dramatically increase the quantity and variety of software, enable new business and funding structures, and increase the importance of curation.

Anecdotally, more and more friends are going the startup route, many of them bootstrapping instead of trying for VC funds. Still, startups are not exactly known for being cushy cuddly jobs. The era of the noon-to-3pm tech worker is over.

Everyone’s Getting Hacked

Speaking of things that happen every week, have you noticed the uptick in major hacks, leaks, and outages?

We’ve identified a security incident that involved unauthorized access to certain internal Vercel systems. We are actively investigating, and we have engaged incident response experts to help investigate and remediate. We have notified law enforcement and will update this page as the investigation progresses.

…

The incident originated with a compromise of Context.ai, a third-party AI tool used by a Vercel employee. The attacker used that access to take over the employee’s individual Vercel Google Workspace account, which enabled them to gain access to that employee’s Vercel account. From there, they were able to pivot into a Vercel environment, and subsequently maneuvered through systems to enumerate and decrypt non-sensitive environment variables.

In early May 2026, Canvas LMS, a learning management system operated by private company Instructure, was affected by a data breach and outage.[1] Instructure disclosed that it was investigating a cybersecurity incident involving certain user data, including names, email addresses, student ID numbers, and messages among users.

…

The incident came to wider public attention on May 7 at approximately 1:20 p.m. PDT (UTC-7), when students began posting screenshots of the defaced Canvas log-in page on Reddit. On May 11, Instructure issued an apology for their lack of transparency on their incident update page. In that statement, they claimed to have reached an agreement with "the unauthorized actor" and that the compromised data was destroyed. The terms of the agreement are not publicly known, but unconfirmed rumors suggest that US$10 million was paid.

Stryker is responding to a global network disruption to our Microsoft environment as a result of a cyber attack. We have no indication of ransomware or malware and believe the incident is contained.

Our teams are working to understand the full impact to our internal environment and while we continue to investigate, below are responses to some of your inquiries:

ShinyHunters in particular has been in the news a lot, hitting Panera Bread, Figure Tech, Rockstar, Canvas (above), McGraw Hill, Aura, and the EU, among others.

And then there were a bunch of zero-days that were dropped, like Copy Fail:

If your kernel was built between 2017 and the patch — which covers essentially every mainstream Linux distribution — you’re in scope.

Copy Fail requires only an unprivileged local user account — no network access, no kernel debugging features, no pre-installed primitives. The kernel crypto API (AF_ALG) ships enabled in essentially every mainstream distro’s default config, so the entire 2017 → patch window is in play out of the box.

Or BlueHammer:

On April 3rd, 2026, a security researcher operating under the alias “Chaotic Eclipse” dropped a fully functional Windows local privilege escalation exploit on GitHub - no coordinated disclosure, no CVE, no patch. Just working exploit code and a pointed message to Microsoft’s Security Response Center: “I was not bluffing Microsoft, and I’m doing it again.”

Coding agents are really good at blasting things at a wall and seeing what sticks. This is, for the most part, a pretty bad way of actually writing code that needs to work well. If you do this too much you’ll end up with a massive spaghetti codebase that is as hard to maintain as it is to secure. But the same strategy is a fantastic way to find unexpected security vulnerabilities in existing code bases. Coding agents can relentlessly attack systems at scale, trying to find every crack and crevice imaginable.

This has some upsides. Open source maintainers are reporting that the number of real bugs and vulns being reported is higher than it’s ever been. Here’s Greg Kroah-Hartman, the ‘Linux kernel czar’:

Months ago, we were getting what we called ‘AI slop,’ AI-generated security reports that were obviously wrong or low quality. It was kind of funny. It didn’t really worry us.

Something happened a month ago, and the world switched. Now we have real reports. All open source projects have real reports that are made with AI, but they’re good, and they’re real.

But it also has downsides, as state-sponsored actors start throwing their token budgets at unsuspecting codebases. The result is something of a race: who can spend the most tokens? Drew Breunig calls this ‘proof of work’ (in reference to the Bitcoin trustless consensus mechanism of the same name):

This chart suggests an interesting security economy: to harden a system we need to spend more tokens discovering exploits than attackers spend exploiting them.

…

If AI continues to find exploits so long as you keep throwing money at it, security is reduced to a brutally simple equation: to harden a system you need to spend more tokens discovering exploits than attackers will spend exploiting them.

You don’t get points for being clever. You win by paying more. It is a system that echoes cryptocurrency’s proof of work system, where success is tied to raw computational work. It’s a low temperature lottery: buy the tokens, maybe you find an exploit. Hopefully you keep trying longer than your attackers.

I more or less agree that this is where security is going, and in some sense all software. Increasingly, differentiation comes from sheer effort and hours over ingenuity.

But I disagree that security ends up being just a simple tokens-in vs tokens-out calculation. There’s a massive asymmetry here: the attacker has a huge advantage.

Imagine you were building, like, a house. There’s basically only a few ways to actually get this right. You have to build a few walls and a roof and at least one door in order to claim success.

But there are infinite ways to get this totally wrong. You could forget the door. You could put the ceiling on upside down. You could build the whole thing with sand and have it blow away in the wind. You could accidentally build a water slide. There’s basically no limit to how creatively you can screw up.

Same with software. Secure software needs to cover all bases. Searching for security vulnerabilities, on the other hand, is a bit like finding a girlfriend or trying to get a job — you only need one. A world of cheap, easily accessible AI may not lead to bio risk, but it could certainly lead to ransomware hell.
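Here is a toy way to put numbers on that asymmetry. To be clear, this model is my own assumption and not something from Breunig's post: it pretends a codebase has some number of independent latent flaws, that the defender finds and fixes each one with probability `p_defender`, and that the attacker finds each surviving flaw with probability `p_attacker`. The attacker wins if even one flaw survives and gets found.

```python
def attacker_win_probability(n_flaws: int, p_defender: float, p_attacker: float) -> float:
    """Chance the attacker lands at least one exploit in a toy independent-flaw model.

    Assumptions (illustrative only): flaws are independent, the defender fixes
    each with probability p_defender, and the attacker finds each unfixed flaw
    with probability p_attacker.
    """
    # A single flaw is exploited only if the defender misses it AND the attacker finds it.
    p_flaw_exploited = (1.0 - p_defender) * p_attacker
    # The defender needs every flaw to hold; the attacker needs just one to give.
    return 1.0 - (1.0 - p_flaw_exploited) ** n_flaws


# Hypothetical numbers: a defender who catches 95% of flaws,
# against an attacker who finds only half of what is left.
for n_flaws in (1, 10, 100, 1000):
    p = attacker_win_probability(n_flaws, p_defender=0.95, p_attacker=0.5)
    print(f"{n_flaws:>5} latent flaws -> attacker succeeds with probability {p:.3f}")
```

In this sketch the defender's odds get worse with every extra latent flaw, even when they are individually much better at finding bugs than the attacker. That is the "you only need one" problem in miniature.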

Of course, I have to mention Claude Mythos. The latest model from Anthropic has been deemed so good at finding security vulnerabilities that the Anthropic team decided not to release it to the general public until a few select software maintainers had a chance to use it to debug their systems. Anthropic haters have roundly condemned this move as mere advertising and theatrics. OpenAI did the same “too dangerous to release” song and dance for the awesome, world ending AI that was GPT-2.

Due to concerns about large language models being used to generate deceptive, biased, or abusive language at scale, we are only releasing a much smaller version of GPT-2 along with sampling code. We are not releasing the dataset, training code, or GPT-2 model weights. Nearly a year ago we wrote in the OpenAI Charter: “we expect that safety and security concerns will reduce our traditional publishing in the future, while increasing the importance of sharing safety, policy, and standards research,” and we see this current work as potentially representing the early beginnings of such concerns, which we expect may grow over time.

I’m poking fun a bit here, but it’s worth noting that OpenAI’s concerns were actually pretty spot on. In the years since GPT-2 was released, we’ve been absolutely flooded by AI slop that has pulled our collective ability to understand reality apart at the seams.

Seems like Mythos is much the same — it actually is pretty good at finding vulnerabilities. See XBOW:

About two months ago, Anthropic invited us to help them assess the capability of a new model they thought represented a significant shift in capability. So we put it through our security gauntlet. Benchmarks, workflows, interactive use, and integrations.

Today, we can finally share details on how we tested Mythos Preview, what we found, and what it means.

Spoilers: This model is a major advance. It is substantially better than prior models at finding vulnerability candidates, especially when source code is available. It communicates with unusual technical precision, reasons well about code, and shows strong promise in complex domains such as native-code analysis and reverse engineering.

or AISI:

The AI Security Institute (AISI) conducted evaluations of Anthropic’s Claude Mythos Preview (announced on 7th April) to assess its cybersecurity capabilities. Our results show that Mythos Preview represents a step up over previous frontier models in a landscape where cyber performance was already rapidly improving.

(Though Daniel Stenberg, the maintainer of curl, is mostly unimpressed.)

It’s worth noting that many of those same Mythos skeptics also claim that GPT 5.5 is as good at finding security vulnerabilities as Mythos and is publicly available. So…ha, I guess? Somehow this doesn’t really make me feel better about the state of software security!

Unfortunately I think our regulators are basically not taking any of this seriously. The only politician who seems at all AI pilled is Bernie (who keeps putting out videos where he directly quotes AI CEOs about how horrible AI will be, and then goes “see?!”). No one else has really picked up the ball.

I suspect we’re going to see a lot more hacks and discovered zero-days for the next year or so, after which things will hopefully mostly stabilize. But it will take that long to adjust to the new normal, especially because state-run hacker groups are probably better at AI enablement than, like, the First Bank of Idaho.

Other Things

General Catalyst Ad

The VC world is often fairly insulated from macro economic pressure. It operates in such a strange niche of the market, with such skewed returns, that most things that impact other asset classes just kinda slide right over. The one thing that matters to every VC I’ve ever spoken to, often more than anything else, is deal flow. VCs need to be able to see deals to do their job, which in turn means that they need to have a steady drumbeat of founders knocking on their door. You could basically view everything else about VC through this lens. Presentations, advertising, social media positioning — it’s all a way to get and maintain deal flow.

General Catalyst is one of the largest VCs in the world. They just posted an ad in which they explicitly criticize other VCs for investing in immoral companies, stating that they have a high bar for ethics in who they invest in (the ad is clearly taking the piss out of a16z. The guy on the left is meant to look like Marc Andreessen. Seems like it got under their skin; I’ve seen 45 quote tweets from Marc himself already 😬).

I think the right way to read this is that VCs are starting to realize just how much the vice signalling ‘we will invest in anything’ approach has hurt their reputations. I know a ton of founders who keep lists of VCs that they refuse to work with because of their past behavior and investments. Looks like that has finally hit mainstream.

Most founders care a lot about the world, their communities, their employees. They are conscientious and ethical people. But many worry about speaking out about things they find unsavory, because Silicon Valley is a small world with some, uh, famously thin skinned individuals wielding a lot of influence. Still, I don’t think most founders realize just how much power they have as a result of the need for deal flow. A few folks publicly voicing their discontent can move mountains.

Musk vs. Altman

Elon donated a bunch of money to OpenAI when it was a non-profit. Then OpenAI became a for-profit. Elon is mad about this. He claims to be mad about this because OpenAI “stole a charity,” i.e. misled him about the nature of his donations. But it’s reasonable to be somewhat suspicious of this claim, since Elon is also running a direct competitor to OpenAI that stands to gain quite a bit if OpenAI is taken down a peg.

Anyway, Elon sued. I am so glad that I live in a country where court cases are public, because the trial has been hilarious so far.

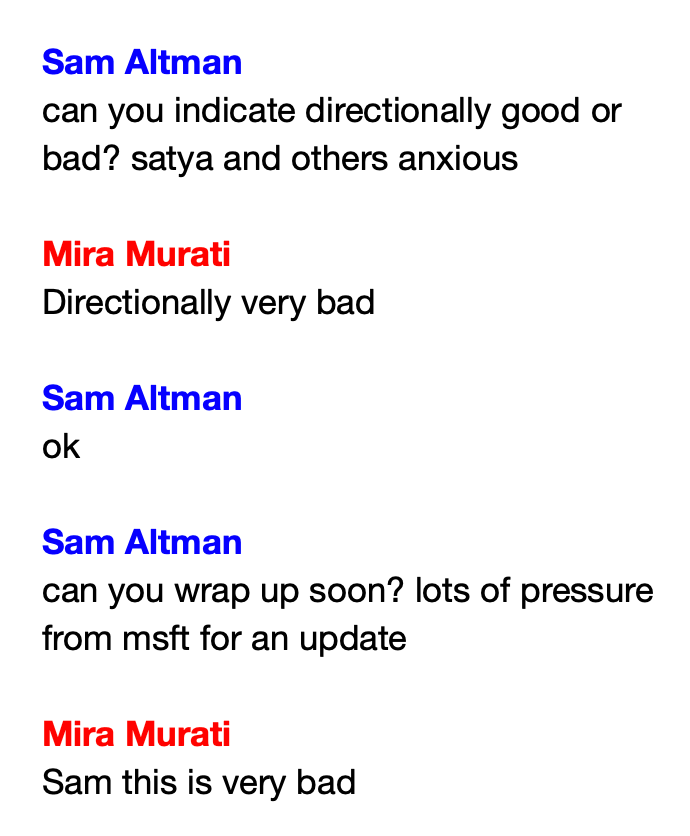

On the Elon side, it’s true that OpenAI changed the company to a for-profit, and it’s true that Elon is mad about it, but it seems like he’s mad mostly because he wanted to make the company a for-profit first, specifically by moving it under Tesla with himself as CEO. On the OpenAI side, both Altman and president Greg Brockman argued that they were not trying to steal the charity, until Brockman’s personal diary was entered into evidence where he was like “wow, can’t believe we’re going to steal this charity.” We also finally got some color on the whole Sam Altman ouster thing, thanks to the incredible Internal Tech Emails account:

Other silliness abounds. Excited to see what else turns up.

GameStop and eBay

GameStop, a company worth $10b, made a $55b acquisition offer for eBay, a company worth $50b. You may notice that this math does not math. From BBC:

The cash and stock offer values eBay at $125 a share, $20 more than the shares were valued at the close of New York trading on Friday, GameStop said in a statement.

In a letter to eBay, GameStop’s chief executive Ryan Cohen said he planned to make $2bn of cost savings at the firm within a year of the deal being completed.

…

GameStop, which currently has a stock market valuation of around $11.9bn, said it has a commitment letter from TD Securities to provide around $20bn in debt to help finance the deal.

…

Shares in eBay jumped by more than 13% in after-hours trading when news of the potential offer emerged on Friday.

Though GameStop has closed many of its stores in recent years, it still has around 1,600 outlets in the US.

Those shops would give eBay a national network for its “live commerce” and other business operations, Cohen said.

I…guess? Like, if I squint, I can just about see the contours of a business that uses local GameStop brick and mortars as the equivalent of Amazon fulfillment hubs, but for decentralized users?

But also, the entire economic engine of the last quarter century or so was ‘take thing that was offline and put it online’. Now we’re, what, taking things online and putting them back in person? Delivery logistics are certainly annoying but surely people can wait an extra few days to have their limited edition lemon juicer shipped last mile to their house vs getting in a car and going to the nearest GameStop (???) to pick it up.

Anyway, eBay said no in the most devastating way possible:

EBay Inc. rejected a $56 billion takeover offer from GameStop Corp. Chief Executive Officer Ryan Cohen, describing the unsolicited bid as “neither credible nor attractive.”

Ouch.

Apparently Cohen is unfazed.

In the letter to eBay Chairman Paul Pressler, Cohen emphasized that eBay shareholders deserved a say on his proposal and went on to criticize top management’s compensation.

“They should not dismiss a $125 per share proposal without engaging on its substance,” he wrote, adding: “The economics are clear and they are public. eBay’s own shareholders deserve the opportunity to evaluate them.”

But the whole point is that the eBay board said there is no substance. Anyway, given how everything else is going, I expect GameStop to be running eBay by the end of the quarter.

Opus 4.7

At this point, I can pretty confidently say that Opus 4.7 is a miss. It seems worse than Opus 4.6 and much worse than Codex. The team has basically entirely switched to one of those instead of using Opus 4.7 for their main work. Hope Anthropic fixes the issues in their next release.

I don’t doubt that AI is making a lot of programmers more productive, sometimes amazingly so. But the company where I work (Bill.com) just announced 30% layoffs (about 600 jobs cut) with a bunch of AI-related justification and, let me tell ya, this company does not know how to use AI. We haven’t even had access to Claude this whole time. Basically their AI strategy has amounted to “fine, fine, if you must use AI, here’s Amazon Q/Kiro, use it, I guess”. No orchestration or coordination between engineers, no investment in internal tool sharing or integrations, nothing like that. But now we’re apparently going “AI Native” and cutting 30% of jobs so we can “move faster,” blah blah blah.

The real story is that the CEO wants to sell the company and the activist investors want to get a return on their investment, so they’re trying to gut the company’s costs. They only want to make the balance sheet look as good as possible to prospective buyers, nothing else.

At the same time they announced these layoffs, they announced a $1 BILLION stock buyback. I don’t know much about finance, but for a company with a $4 billion market cap, that seems like a lot of money to spend on your own stock.

Anyway, if you need an example of a company saying they’re making an AI related cut, but it’s very very obviously not about AI, there ya go.

From what it appears, companies are using AI as a cover for moving jobs from the US to India. Trump jacked up the fee for H-1B visas and played games with tariffs. That, combined with a change in the US tax law regarding R&D work, seems to have spooked businesses into moving development out of the US. As long as the company also cites AI for the US job losses, the company will get a good stock boost too.

India is one of the obvious beneficiaries. Turns out, they can also tell AI to write code. The resulting code AI produces is exactly the same as if it were produced in the US. Go figure. Companies get the exact same AI generated code for a fraction of the price that American engineers demand.