Agentics: AI enablement requires managed agent runtimes

AI enablement in enterprise requires nothing less than fully managed agent runtimes

My mom called me over the weekend. Normally when mom calls, it’s to lovingly tell me to throw out my entire wardrobe or to ask when I’m going to buy her a penthouse. She does this ~3 times a week. Typical mom things. Imagine my surprise when I pick up the phone and the first thing out of her mouth is “actually I’m going to call you on WhatsApp video because Claude Code isn’t working.” And then for the next hour I get my mom set up on Claude Code through a shaky horizontal phone video stream, guest starring Dad as the cameraman. Apparently mom’s boss’s boss’s boss’s boss announced a company-wide mandate that everyone had to install and use Claude Code, and my mom had to figure out how to make the thing work in Windows PowerShell. It’s official guys, AI has hit the mainstream.

The actual install was pretty straightforward.[1] Took about 5 minutes. Explaining how to configure the thing took another 55. “Most of your configuration should be in SKILL files, which need to live in the skills directory. Also you need a CLAUDE.md file, which is basically like a SKILL file but it gets added to every prompt…what do I mean by that? No skills don’t actually get added to the prompt, the agent has to choose to read those, the skill descriptions get added to the system prompt. No they don’t exactly get added to the CLAUDE.md, but they kinda do, they are both part of the system prompt…ok yes subagents are a different thing than skills, but slash commands are the same thing as skills. But it’s all markdown. No, subagents also get access to your CLAUDE.md and your skills. But that also gets added to the CLAUDE.md. Also you have different sets of these possibly from every folder. Some of these will live in your git repo. No the agent won’t pick up the ones in the git repo automatically unless you copy the files in the right place. What’s a git repo? uh…”

This shit is way too hard and way too unintuitive.

The problem is that there isn’t any stability. The macro environment is constantly changing, with Anthropic et al. shipping new footguns basically daily. Here’s a great example. Claude Code uses CLAUDE.md. Codex CLI uses AGENTS.md. Gemini uses both AGENTS.md and GEMINI.md. Most tools have, at this point, switched to supporting AGENTS.md for standardization. But Claude Code, the industry leader for this sort of thing and the one that every CEO seems to insist on using, forces its own standard.[2]
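To make the file-name mess concrete, here is a minimal sketch of the kind of glue script teams end up writing: keep one canonical instruction file and copy it to every other name an agent might look for. The mapping below just restates the file names mentioned above and should be treated as an assumption (check each tool's current docs); `sync_instruction_files` is a hypothetical helper, not part of any of these tools.

```python
from pathlib import Path

# Assumption: file names as described in this post, not verified against
# each tool's current documentation.
INSTRUCTION_FILES = {
    "claude-code": ["CLAUDE.md"],
    "codex-cli": ["AGENTS.md"],
    "gemini": ["AGENTS.md", "GEMINI.md"],
}


def sync_instruction_files(repo: Path, canonical: str = "AGENTS.md") -> list[Path]:
    """Copy the canonical instruction file to every other known file name.

    Returns the paths that were written. Existing copies are overwritten.
    """
    body = (repo / canonical).read_text(encoding="utf-8")
    # Collect every distinct file name any agent in the mapping reads.
    targets = sorted({name for names in INSTRUCTION_FILES.values() for name in names})
    written = []
    for name in targets:
        if name == canonical:
            continue  # never overwrite the source of truth
        target = repo / name
        target.write_text(body, encoding="utf-8")
        written.append(target)
    return written
```

Even this tiny script has to be distributed, run, and kept up to date on every laptop, which is sort of the whole problem.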

And it is just way too easy for a given user to totally screw their environment up. “Claude, add a skill to automatically load my AWS credentials” and now you have a security leak that in two months will take out all the data centers in Wisconsin, whoops.

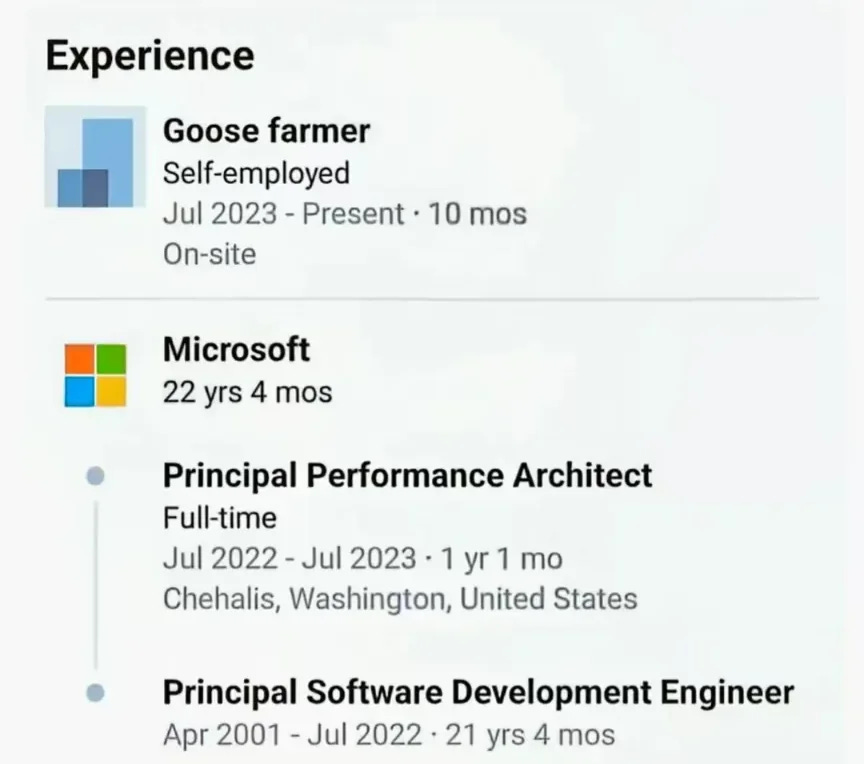

I can’t imagine being a TL in this setting. Every TL I know eventually reaches for some kind of stable cloud dev box environment, because debugging someone’s Python env by spending 4 hours over their shoulder is a great way to want to throw your computer into a lake and take up a career in goose farming.

AI has pushed everyone back to their local boxes. So now you have all the usual dependency problems, but also you have a new batch of AI weirdness. Raise your hand if you have had someone complain about Claude “getting way worse”, only to discover that they have 50,000 tokens in their CLAUDE.md and another 20,000 tokens used up by random MCP server tools.
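If you want a quick sanity check for this, something like the sketch below will flag bloated instruction files. It assumes a crude rule of thumb of roughly 4 characters per token, which is not the tokenizer any real model uses, and the 5,000-token budget is an arbitrary number picked for illustration. `audit_context_files` is a hypothetical helper.

```python
from pathlib import Path

# Assumption: ~4 characters per token is a rough heuristic for English text,
# not a real tokenizer. Good enough to spot a 50,000-token CLAUDE.md.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Rough token estimate from character count, rounded up."""
    return -(-len(text) // CHARS_PER_TOKEN)


def audit_context_files(paths: list[Path], budget: int = 5_000) -> list[tuple[str, int]]:
    """Return (path, estimated_tokens) for each existing file over budget, largest first."""
    over = []
    for path in paths:
        if not path.is_file():
            continue  # skip files this machine does not have
        tokens = estimate_tokens(path.read_text(encoding="utf-8", errors="replace"))
        if tokens > budget:
            over.append((str(path), tokens))
    return sorted(over, key=lambda item: item[1], reverse=True)
```

You would point it at whatever instruction files exist on a given machine, for example `audit_context_files([Path("CLAUDE.md"), Path("AGENTS.md")])`. It does not account for MCP tool definitions, which live outside these files.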

I think the ecosystem makes this unnecessarily hard too. It is too easy to download random skills from external repos. There is no curation, and every agent is capable enough to make everyone dangerous. We saw this in action a few months ago with all the OpenClaw leaks. As I said before:

Meanwhile, internal teams are still sending around config files through Slack, which is only marginally more efficient than walking over to your buddy’s place with a USB drive. The lack of team-wide organization has made it virtually impossible to effectively distribute something to a team larger than 8.

Taking a step back, many people have written about how AI has been consumer first. Quoting Karpathy:

Transformative technologies usually follow a top-down diffusion path: originating in government or military contexts, passing through corporations, and eventually reaching individuals - think electricity, cryptography, computers, flight, the internet, or GPS. This progression feels intuitive, new and powerful technologies are usually scarce, capital-intensive, and their use requires specialized technical expertise in the early stages.

So it strikes me as quite unique and remarkable that LLMs display a dramatic reversal of this pattern - they generate disproportionate benefit for regular people, while their impact is a lot more muted and lagging in corporations and governments

But that means the enterprise ecosystem is uniquely underdeveloped despite the massive demand. Everything that’s been released has really been targeted towards consumers, hobbyists, and tinkerers. I think it’s great that tinkerers can configure things to their taste, but that is zero help for someone like my mom who just wants some kind of admin-controlled environment that has everything set up already. Her area of expertise is not dev tools! Having her set up a ton of dev tools and integrations and whatever else wastes her time and wastes the company’s time. If your sales people are doing the equivalent of managing the company VPN, something has gone horribly wrong.

I think there’s a big gap in the market for managed agent runtimes (also known as ‘background agents’ because they can run in the background on the cloud). Everything else (org-level skills, for example) is just a band-aid. I’ve said in the past that for coding agents, the filesystem is the config. That means that you have to control the entire filesystem to get a consistent (and secure!) experience. It is simply not enough to assume that everyone in the org will learn not just how to use AI, but also how to use a CLI and how to use Bash and how to use git and how to use the million other tools that are required to get a coding agent off the ground. And even that may not be enough. One of our customers, a CTO of a highly technical eng team, was saying earlier today that any time he makes a change to an agent system prompt:

I need to take ten calls just to make sure everyone is on the same page, and for the more junior engineers those will be video calls. Just a ton of work for what is potentially a single line change to adapt to a new agent like Opus 4.7 or whatever.
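If the filesystem is the config, then "is everyone on the same page" is really a question about whether everyone has the same files. A minimal sketch of that check, assuming you can collect each person's config files as a mapping of relative path to contents (the function names and the example paths are hypothetical, for illustration only):

```python
import hashlib


def fingerprint(files: dict[str, str]) -> dict[str, str]:
    """Map each relative path to the SHA-256 hex digest of its contents."""
    return {
        path: hashlib.sha256(body.encode("utf-8")).hexdigest()
        for path, body in files.items()
    }


def drift(expected: dict[str, str], actual: dict[str, str]) -> dict[str, list[str]]:
    """Compare two fingerprints and report missing, extra, and changed paths."""
    shared = expected.keys() & actual.keys()
    return {
        "missing": sorted(expected.keys() - actual.keys()),
        "extra": sorted(actual.keys() - expected.keys()),
        "changed": sorted(p for p in shared if expected[p] != actual[p]),
    }
```

Comparing a team-wide baseline against one engineer's machine would look like `drift(fingerprint(baseline_files), fingerprint(local_files))`. This only detects drift. Fixing it on every laptop is still ten calls, which is the argument for controlling the environment centrally in the first place.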

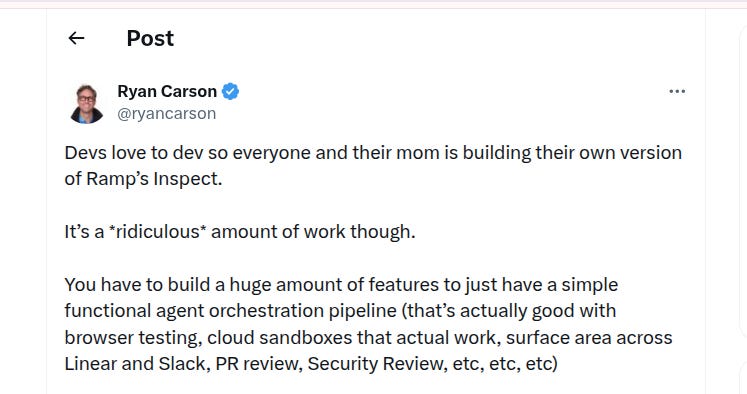

So far, the labs have totally dropped the ball on this. I kinda understand why: OpenAI and Anthropic make money off tokens, so they do not want to provide configurable environments that would allow someone to switch to their competitors’ models. But that just leaves the ecosystem wanting for a better solution. Large corps recognize the demand and have started building their own in-house solutions, including Ramp, Stripe, Spotify, Uber, Shopify, Block, and Jane Street (which, as usual, built theirs in OCaml). Each of these companies has teams of 10+ senior engineers developing this infrastructure while also leveraging years of pre-existing bespoke infrastructure and standardization. This is totally untenable for most Series C-and-lower tech companies, not to mention all of the valuable companies that do not have this kind of software expertise in house.

More solutions are starting to pop up.

For folks who want to roll their own software, Vercel and LangChain recently open-sourced background agent repos (along with a few others), though there are surprisingly few good tutorials out there for setting this sort of thing up internally. I have a conflict of interest here (see below), but in my experience the lack of easy walk-throughs is precisely because this shit is actually really hard to roll out. To get real adoption you need a real product with real polish, and that’s before you get into infra management and security and integration hell. All of that in turn requires a fair bit of maintenance to keep operating smoothly, especially if you’re mostly looking for AI enablement among non-technical or semi-technical teams. The product surface area is huge.

I think most teams should go for a fully managed service. Devin has been in the space for a long time. I think Twill is a relative newcomer that also does something like this? But there aren’t a ton of other services that actually follow the Ramp-Inspect / Stripe-Minions model. So when we ran into this problem ourselves, we weren’t happy with most of the other options out there and set out to build our own, which we now sell as an off-the-shelf option with a lot of customization flexibility (if you’re a ~Series A to ~Series D exec with a lot of AI FOMO, and you’re thinking about building your own background agents, reach out).

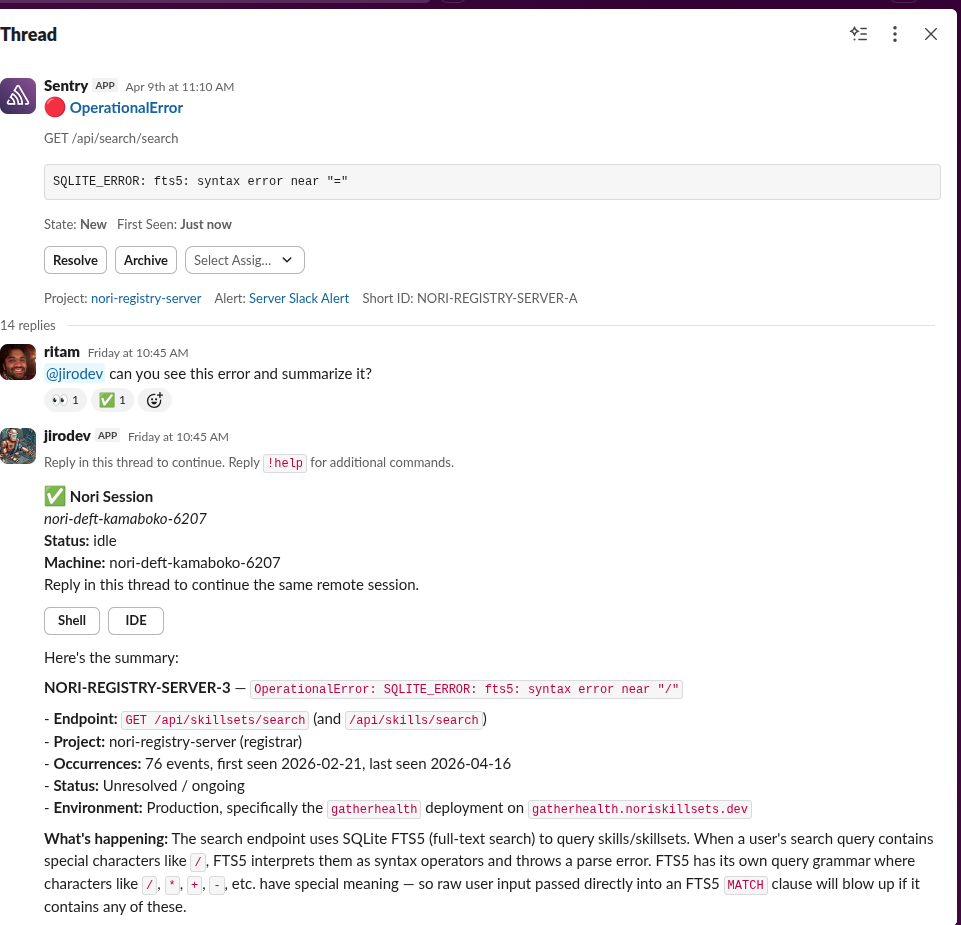

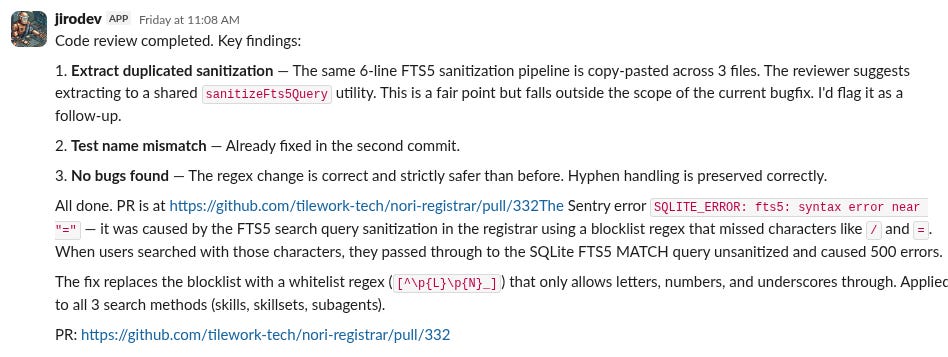

I have to say, having a tool like this is pretty incredible, and I’m really glad we built it. More than 30% of our PRs are shipped entirely through Slack, and we have all sorts of really cool automations for everything from bug triage to newsletter writing. But all of that takes a lot of maintenance, and we spend literally all day just thinking about how to make this one thing awesome, and it would be impossible to do that if we were building in, like, healthcare or manufacturing instead.

Of course, the difficulty of building and maintaining the product doesn’t remove the need for something like it to exist. I think if you are an exec who is thinking about how to get your team to use AI effectively, and you care about AI enablement, you need to pave the way. And that means removing all the setup, removing the need to learn a ton of jargon, and removing the implicit requirement to stay plugged into Twitter. Once anyone can use AI, you start getting real creativity from people who can use these tools to superpower what they are good at. But that won’t happen if your team is still trying to wrap their heads around when to use a SKILL and when to use an MCP, or even how to set up a PowerShell CLI. Just hand it off to someone else and get back to shipping for your customers.

Agentics is the study of how to use and reason about agents. If you are an expert in coding agents, or interested in learning more about agents, join our community Slack. More articles here. Learn more about Nori at https://noriagentic.com/

[1] well, as straightforward as PowerShell can be

[2] people keep making the anthropic == apple comparison, and, like...